Introduction

The future of AI by 2030 will probably look less like one dramatic moment and more like a steady operational shift. We are already seeing the early pattern: frontier model quality keeps improving, AI is entering daily products, enterprise adoption is broadening, and leaders are starting to redesign workflows rather than just test isolated tools.

This article was reviewed against official and institutional sources available on March 29, 2026, including Stanford HAI, the World Economic Forum, UNESCO, the IMF, the ILO, and McKinsey research. The predictions below are reasoned inferences from those signals, not guarantees.

What "the future of AI" actually means

When people discuss the future of artificial intelligence, they often mix together several different questions. One is about capability: what models will be able to do. Another is about deployment: where AI will be used in real systems. A third is about economics: who captures value and who pays the operational cost. A fourth is about governance: what becomes acceptable, auditable, safe, and legally defensible.

That is why the future of AI is not only a model story. It is also a software architecture story, a procurement story, a policy story, a workforce story, and a change-management story.

Why this matters now

Most teams still have time to prepare, but little time to remain passive. Stanford HAI's 2025 AI Index notes that AI is increasingly embedded in everyday life, from healthcare to transport, while McKinsey's 2025 survey finds that 88% of respondents report regular AI use in at least one business function. Yet the same survey also shows that most organizations are still early in scaling.

Practical implication: the competitive gap by 2030 may not come from who experimented first. It may come from who built evaluation, process redesign, data readiness, and governance early enough to scale responsibly.

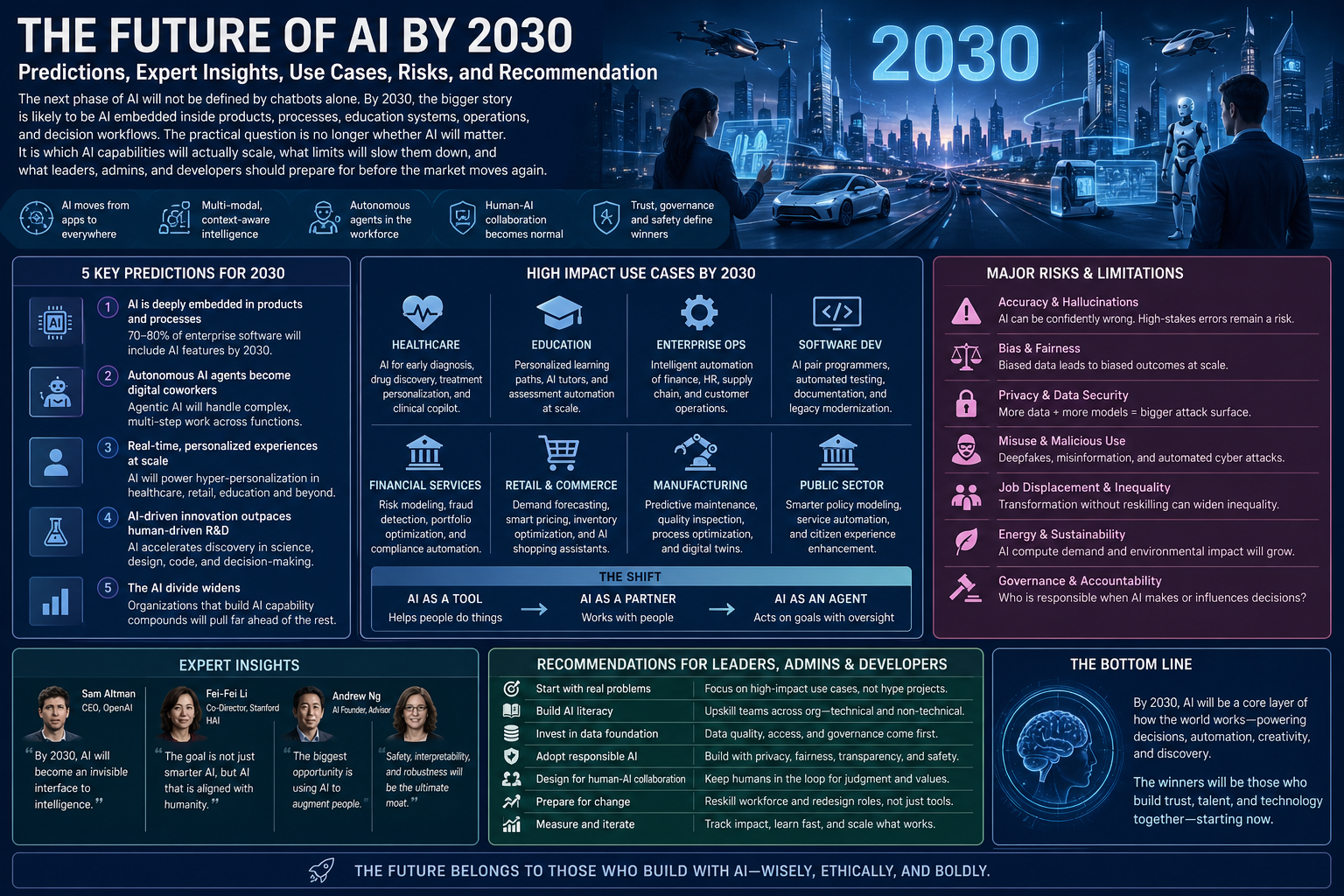

Predictions for AI by 2030

These are the most defensible predictions based on current evidence.

| Prediction | Why it is likely | What it means in practice |
|---|---|---|
| 1. Multimodal AI becomes normal | Model progress is moving beyond text into image, audio, video, and software tasks. | Users will expect one system to read, listen, see, summarize, generate, and act across formats. |
| 2. AI agents move from demos to constrained workflow execution | Enterprise interest in agentic systems is growing, but scale still depends on reliability and process fit. | Agents will handle bounded tasks like research prep, support triage, knowledge retrieval, and internal orchestration before fully autonomous work. |
| 3. AI becomes infrastructure inside software, not only a standalone app | Organizations want AI where work already happens: CRM, service desks, IDEs, ERP, docs, and analytics tools. | The winning products will hide AI inside real workflows instead of forcing people into separate chat windows. |
| 4. Governance becomes a buying requirement | As AI enters sensitive domains, buyers will ask for auditability, data boundaries, evaluation, and policy controls. | Security, privacy, grounding, monitoring, and approval flows become part of core product design. |
| 5. AI changes more jobs than it fully eliminates | ILO, OECD, IMF, and WEF signals all point more toward task and role redesign than universal redundancy. | Teams will spend more time supervising, validating, escalating, and integrating AI-driven outputs. |
| 6. Physical-world AI grows, but slower than software AI | Robotics, autonomy, and edge AI face higher safety, hardware, and environmental complexity. | Warehouses, logistics, manufacturing, inspection, and mobility will advance first in structured environments. |
| 7. Compute, energy, and data quality remain strategic constraints | Better models alone do not remove infrastructure cost, latency, and data governance constraints. | Organizations that prepare clean context, retrieval layers, and strong operating models will outperform those that only buy access to models. |

The biggest mistake is to imagine 2030 AI as one magical general machine that simply replaces organizations. A better mental model is many narrower AI systems embedded in many real processes, each valuable only when the surrounding workflow is designed well.

Practical use cases

These examples show what the future of AI may look like when it actually lands inside day-to-day operations.

From chatbot to resolution workflow

Today, many companies stop at FAQ automation. By 2030, stronger systems will likely classify cases, retrieve policy-aware context, draft a response, propose the next best action, summarize the interaction, and hand off to a human with a full recommendation bundle. The human stays accountable, but the AI removes a large amount of repetitive operational work.
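To make the shape of such a resolution workflow concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption: the `RecommendationBundle` type, the `POLICY_SNIPPETS` data, the keyword-based `classify` function (a stand-in for a real model call), and the action names are hypothetical and do not come from any specific product.

```python
from dataclasses import dataclass

# Hypothetical policy snippets keyed by case category (illustrative data only).
POLICY_SNIPPETS = {
    "billing": "Refunds under the standard policy require manager approval.",
    "access": "Password resets require identity verification first.",
    "general": "Route to the general support queue.",
}

# Hypothetical mapping from category to a proposed next action.
NEXT_ACTIONS = {
    "billing": "escalate_to_manager",
    "access": "verify_identity",
}


@dataclass
class RecommendationBundle:
    category: str
    context: str
    draft_reply: str
    next_action: str
    summary: str
    needs_human_approval: bool = True  # the human stays accountable


def classify(message: str) -> str:
    """Toy keyword classifier standing in for a model call."""
    text = message.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    if "password" in text or "login" in text:
        return "access"
    return "general"


def build_bundle(message: str) -> RecommendationBundle:
    """Classify, retrieve context, draft, propose an action, and summarize."""
    category = classify(message)
    context = POLICY_SNIPPETS[category]
    draft = f"Thanks for reaching out. Regarding your {category} request: {context}"
    next_action = NEXT_ACTIONS.get(category, "assign_to_queue")
    summary = f"[{category}] {message[:60]}"
    return RecommendationBundle(category, context, draft, next_action, summary)
```

The design point is the last field: the bundle always arrives flagged for human approval, so the system prepares the decision without making it.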

From code completion to engineering workflow support

Developers will not only ask AI to write functions. AI will increasingly help with ticket breakdown, code explanation, test suggestion, log summarization, regression detection, migration drafting, and documentation upkeep. That does not remove the need for developers. It increases the value of strong developers who can review, shape, and validate machine-generated work.

From dictation support to process acceleration

In non-diagnostic operational settings such as healthcare administration, AI can capture notes, structure records, pre-fill documentation, summarize patient interaction history, and flag missing information. The more regulated the environment, the more important audit trails, versioning, role-based access, and human sign-off become.

From generic tutoring to guided learning systems

AI will increasingly help create adaptive explanations, formative quizzes, feedback drafts, accessibility support, and differentiated lesson materials. The real step change comes when schools combine this with policy, teacher training, disclosure rules, and redesigned assessment.

Admin and developer perspective

| Role | What matters most by 2030 | Practical takeaway |
|---|---|---|
| Business admin / IT admin | Access policies, approved tools, data retention, vendor risk, monitoring, and internal rollout discipline. | Do not treat AI as only a feature purchase. Treat it as an operating model decision. |
| Developer / architect | Grounding quality, orchestration, evaluations, guardrails, observability, fallbacks, and human review design. | Most AI failures in production will be systems failures, not only model failures. |
| Operations leader | Workflow redesign, redeployment of staff time, escalation rules, and measurable KPIs. | Value comes from process redesign, not from simply pasting AI on top of a broken workflow. |

Best practices

- Design for bounded autonomy: let AI do more only where the task, inputs, and escalation paths are clear.

- Invest in context quality: retrieval, permissions, source freshness, and structured internal knowledge matter more than hype.

- Measure real outcomes: track resolution time, error rates, adoption, escalation quality, cycle time, and user trust.

- Keep humans in high-stakes loops: healthcare, finance, education, legal, and HR use cases need explicit review patterns.

- Prepare the workforce: train people to supervise, evaluate, and collaborate with AI instead of treating rollout as a secret technical project.
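Bounded autonomy and human-in-the-loop review can be expressed as a simple routing rule. The sketch below is one possible shape, not a standard: the `route` function, the task allowlist, the domain set, and the 0.9 confidence threshold are all assumptions chosen for illustration.

```python
# Domains from the best practices above that should always get explicit review.
HIGH_STAKES_DOMAINS = {"healthcare", "finance", "education", "legal", "hr"}

# Hypothetical allowlist of tasks the AI may complete without sign-off.
AUTO_APPROVED_TASKS = {"summarization", "classification", "retrieval"}


def route(task: str, domain: str, confidence: float, threshold: float = 0.9) -> str:
    """Decide whether an AI output may proceed without a human.

    Returns one of "human_review", "escalate", or "auto_execute".
    """
    if domain.lower() in HIGH_STAKES_DOMAINS:
        return "human_review"  # high-stakes loops always keep a human
    if task not in AUTO_APPROVED_TASKS:
        return "human_review"  # task is outside the bounded scope
    if confidence < threshold:
        return "escalate"  # in scope, but the system is unsure
    return "auto_execute"
```

For example, `route("summarization", "retail", 0.95)` returns `"auto_execute"`, while the same task in `"healthcare"` returns `"human_review"` regardless of confidence. Checking the domain first is deliberate: a high confidence score should never override a review requirement.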

Limitations and risks

Not every bold AI prediction will happen on time. Some capabilities will arrive faster than reliable business adoption. Others will look impressive in demos but fail in messy operational environments.

- Hallucination and inaccuracy: many systems still sound more reliable than they actually are.

- Governance lag: institutions often adopt tools before they define policy.

- Integration friction: legacy systems, permissions, and fragmented data slow real deployment.

- Economic unevenness: not every company will capture value equally, and not every worker will benefit equally.

- Regulatory divergence: what is allowed in one market or industry may be restricted in another.

Recommendation

If you want to prepare for AI by 2030, do not optimize for trend-chasing. Optimize for AI readiness. That means better data boundaries, clearer workflows, stronger internal knowledge systems, deliberate governance, and practical team training.

For most organizations, the right move is to start with high-frequency, medium-risk workflows where AI can help with summarization, drafting, classification, retrieval, and operational coordination. Then expand only after you can evaluate quality and manage failure modes responsibly.
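The "expand only after you can evaluate quality" rule can be turned into an explicit gate. This is a minimal sketch under stated assumptions: the function names, the 5% error ceiling, and the 100-sample minimum are hypothetical placeholders that each organization would need to set for its own risk tolerance.

```python
def error_rate(outcomes: list[bool]) -> float:
    """Fraction of human-reviewed outputs that were marked incorrect.

    Each entry is True if a reviewer accepted the AI output, False otherwise.
    """
    if not outcomes:
        raise ValueError("no reviewed outcomes to evaluate")
    return outcomes.count(False) / len(outcomes)


def ready_to_expand(
    outcomes: list[bool],
    max_error_rate: float = 0.05,  # assumed ceiling, not a recommendation
    min_samples: int = 100,  # assumed minimum evidence before expanding
) -> bool:
    """Allow expansion only with enough reviewed samples and a low error rate."""
    if len(outcomes) < min_samples:
        return False
    return error_rate(outcomes) <= max_error_rate
```

With these placeholder thresholds, a pilot with 98 accepted and 2 rejected outputs would pass the gate, while a pilot with only 10 reviewed outputs would not, however good those 10 looked.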

My recommendation: plan for a world where AI is everywhere, but trust is scarce. The organizations that win by 2030 will not be the loudest adopters. They will be the ones that make AI dependable, useful, governable, and economically worth scaling.